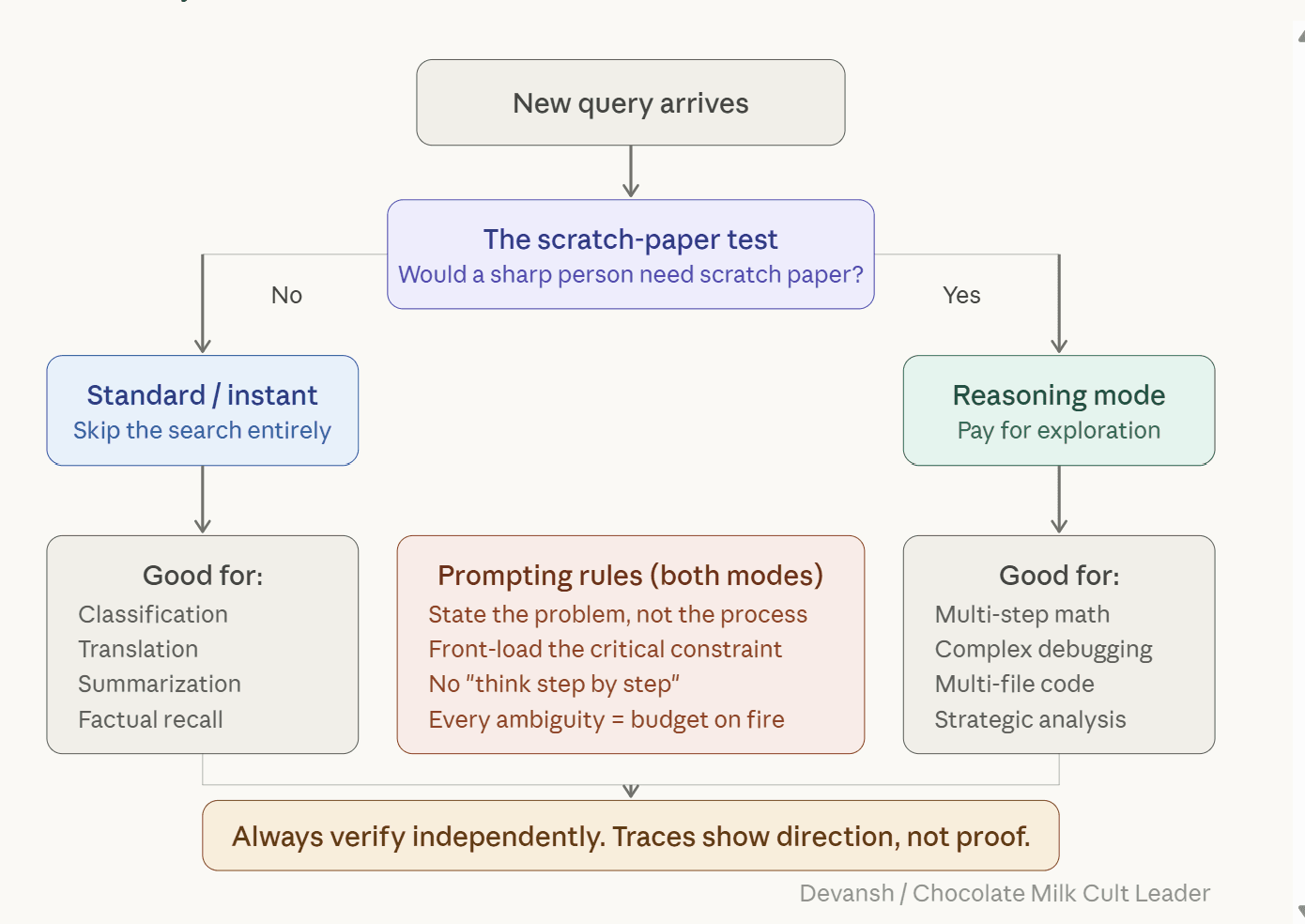

How to Prompt Reasoning Models Effectively

Understanding Why Reasoning Models Are Different from Normal LLMs and How to Prompt Them.

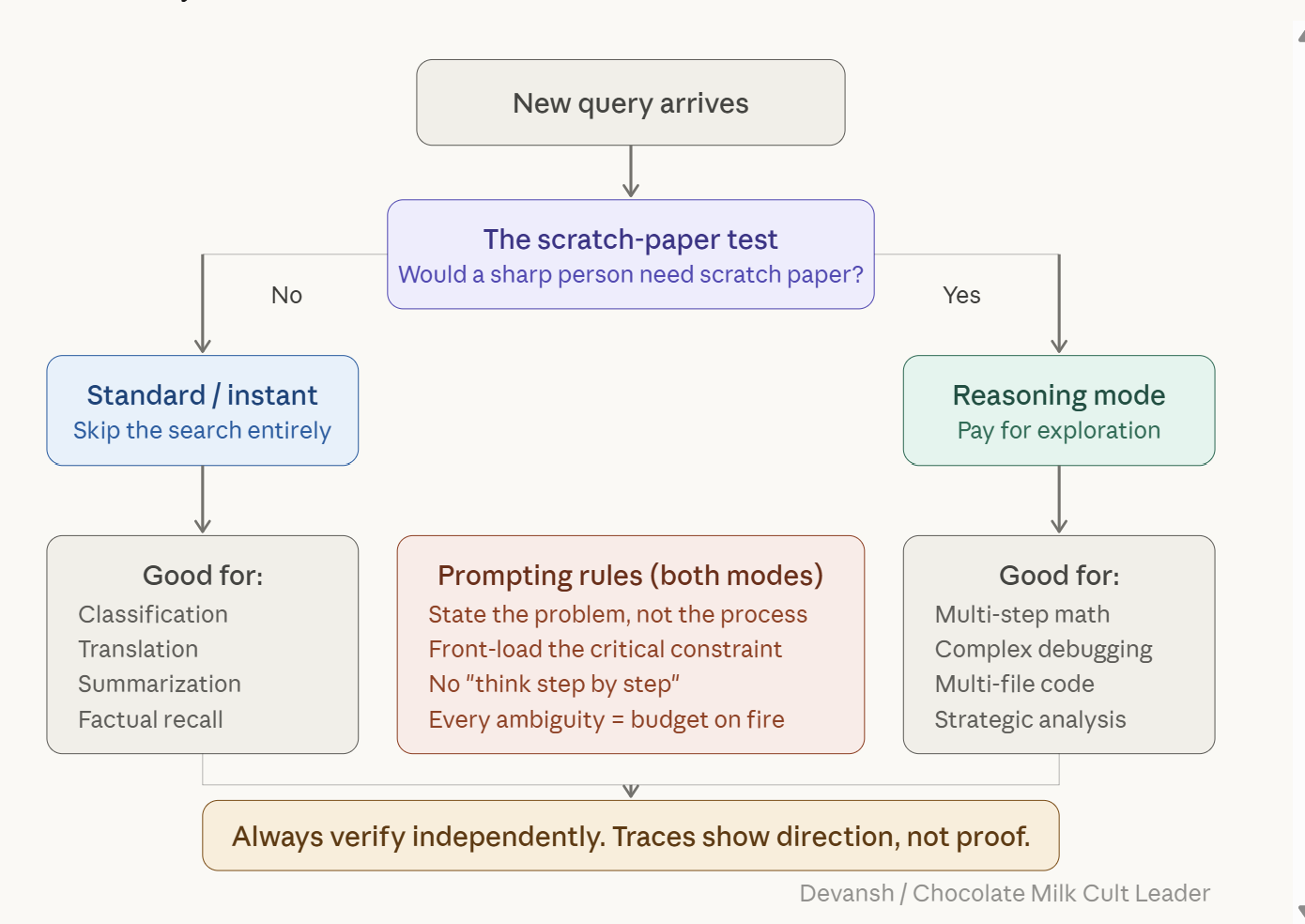

Most people are still prompting reasoning models like it is 2023, spelling out how the model should think through each step. That used to help. Now it often wastes money, adds latency, and sometimes makes the answer worse.

A study from Wharton’s Generative AI Lab tested 198 PhD-level questions across biology, physics, and chemistry. Chain-of-thought instructions, the single most popular prompting technique since 2022, bought 2.9 to 3.1 percent accuracy on reasoning models while adding 20 to 80 percent latency. On Gemini 2.5 Flash, chain-of-thought made results worse: negative 3.3 percent. You’d have gotten better answers by not trying to help. “Mind Your Step (by Step)” (COLM 2025) went further: on pattern recognition tasks, turning on reasoning mode dropped accuracy by up to 36.3 percent versus a standard model. The technique designed to make models smarter is making the smart models dumber.

The providers know. OpenAI, Anthropic, Google, and DeepSeek all explicitly warn against chain-of-thought prompting on reasoning models. But the advice economy runs on lag. Most courses, research, and common tips still target the older generation of standard LLMs. That makes them outdated for the current paradigm, because reasoning LLMs go through very different post-training and alignment than those older models.
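To make that advice concrete, here is a minimal sketch of what "stop adding chain-of-thought" can look like in practice. It is an illustration only, not any provider's API: the `build_prompt` helper, the `is_reasoning_model` flag, and the exact suffix wording are all hypothetical names chosen for this example.

```python
# Hypothetical helper for illustration; nothing here calls a real provider API.
COT_SUFFIX = "Let's think step by step."


def build_prompt(question: str, is_reasoning_model: bool) -> str:
    """Return the prompt text to send, depending on the kind of model."""
    question = question.strip()
    if is_reasoning_model:
        # Reasoning models already deliberate internally,
        # so the task is sent as-is with no step-by-step nudge.
        return question
    # Older, non-reasoning models may still benefit from an explicit nudge.
    return f"{question}\n\n{COT_SUFFIX}"


if __name__ == "__main__":
    q = "Which planet has the most moons?"
    print(build_prompt(q, is_reasoning_model=True))
    print("---")
    print(build_prompt(q, is_reasoning_model=False))
```

The design choice is simply to make the step-by-step nudge conditional on the model type instead of pasting it into every prompt by habit.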

In this deep dive, we combine our conversations with the builders of various AI models, research papers, and insights from practitioners to explain the mechanics of reasoning models, why the standard prompting techniques you’re taught online actually hurt them, and how you can prompt them better. You will also get eight research-grounded rules that work across model families and architectures, so you can apply them to the model of your choice.

^^A preview of what you’re getting today.

This article is written for the technical layman, with no deep AI or software engineering skills required. All you’ll need is an attention span, a desire to learn, and a willingness to experiment and internalize knowledge. If that describes you, this article will help you skyrocket your productivity and do higher-quality work in less time.

Want to see how Irys applies these ideas in practice?